Across many enterprises, a Voice AI pilot dazzles in a demo, then quietly stalls when asked to handle real call volumes, legacy telephony, and strict compliance rules. The gap between a neat proof of concept and a production-grade Enterprise Voice AI Deployment is where CX strategies often lose momentum.

For CX leaders, digital transformation teams, and enterprise architects, the challenge is no longer whether conversational AI works. The challenge is how to scale it across complex contact center stacks, multiple regions, and converged voice and chat journeys without putting service levels or risk posture in jeopardy.

This practical guide breaks down what an enterprise deployment really entails, far beyond a single bot. You will see how architecture, orchestration, integrations, security, and operations fit together, and how to move from pilot to resilient production with confidence.

Why Scaling Voice AI Is Hard

Voice AI is deceptively simple in a lab. A few intents, a small call sample, and one contact number can produce impressive demos. The real complexity appears when the same solution must live inside an enterprise telephony and contact center ecosystem, handle peak season loads, and satisfy internal risk teams.

Several pressures converge at once:

- Fragmented telephony and contact center stacks. Many enterprises run a mix of on-premises PBX, SBCs, SIP trunks, legacy IVR, and one or more CCaaS platforms. A scalable Enterprise Voice AI Deployment must coexist with this estate, preserve routing logic, support call recording, and respect existing failover paths.

- Real-time latency and uptime expectations. Customers notice even small delays on a live call. End-to-end latency has to stay within a few hundred milliseconds across ASR, NLU, orchestration, and TTS, while also meeting stringent availability targets. Guidance from cloud architecture frameworks such as the AWS Well-Architected Framework highlights how many layers contribute to reliability.

- Governance, risk, and compliance. Voice interactions contain some of the most sensitive customer data. Risk, legal, and privacy teams need clear controls for recording, storage, access, and automated decisions. Frameworks like the NIST Privacy Framework set expectations for data governance that pilots rarely address.

- Consistency across voice and chat. Customers expect one coherent brand, not a smart chat experience and a basic IVR. That means intent models, knowledge, and policies must be aligned across channels to support converged experiences.

In short, scaling fails when teams treat Voice AI as an isolated project instead of an integral part of the enterprise CX and technology architecture.
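The latency pressure described above can be made concrete with a simple per-stage budget check. The following is a minimal sketch only: the stage names follow the pipeline named in the text, while the `StageLatency` type, the `check_budget` helper, the 400 ms budget, and all measurements are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class StageLatency:
    name: str
    p95_ms: float  # measured 95th-percentile latency for this stage


def check_budget(stages: List[StageLatency], budget_ms: float) -> Tuple[float, bool, str]:
    """Return (total_ms, within_budget, name_of_slowest_stage).

    Summing per-stage p95 values is a conservative approximation of the
    end-to-end p95, which makes it a reasonable first-pass planning check.
    """
    total = sum(s.p95_ms for s in stages)
    slowest = max(stages, key=lambda s: s.p95_ms)
    return total, total <= budget_ms, slowest.name


# Hypothetical measurements, for illustration only.
stages = [
    StageLatency("asr", 150.0),
    StageLatency("nlu", 60.0),
    StageLatency("orchestration", 40.0),
    StageLatency("tts", 120.0),
]

total, ok, slowest = check_budget(stages, budget_ms=400.0)
print(f"total={total:.0f} ms, within budget={ok}, largest contributor={slowest}")
```

A check like this is most useful when it runs against real production measurements, so that the stage consuming most of the budget is visible before customers notice the delay.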

Core Architecture And Components

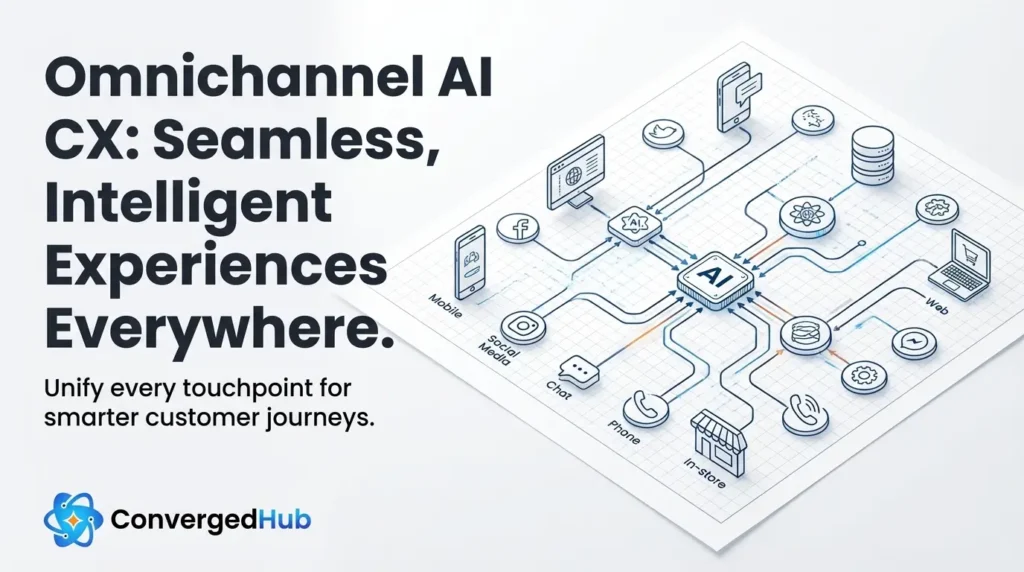

A robust Enterprise Voice AI Deployment is an ecosystem, not a single model. It combines network, speech, language, orchestration, and business systems into one coordinated flow.

At a high level, the stack includes:

- Telephony and SBCs. Session Border Controllers, SIP trunks, and call routing engines connect the public telephone network to your contact center. The Voice AI application needs secure, low-latency access at this layer, while still honoring routing, call recording, and compliance policies.

- Automatic Speech Recognition (ASR). Streaming ASR converts audio into text in real time. For production, you need support for multiple languages and accents, custom vocabularies for industry terms, and accurate word-level timestamps for analytics.

- Natural Language Understanding (NLU). NLU maps utterances to intents, entities, and sentiment. This may be a traditional intent classifier, a large language model, or a hybrid approach. Accuracy must hold up under noisy real-world audio and unexpected phrasing.

- Dialogue management and orchestration. An orchestration layer coordinates the conversation, external calls, error handling, and escalation logic. It decides when to ask clarifying questions, when to call APIs, and when to hand over to a human agent.

- Business system integrations. Voice AI only feels intelligent when it can see customer context and act on it. This means real-time integrations with CRM, ticketing, order management, billing, and authentication services via APIs and event streams.

- Text-to-Speech (TTS). High-quality, low-latency TTS delivers natural responses. Enterprises often require multiple voices for different brands or regions, and may prefer neural voices with appropriate controls for tone.

- Analytics, logging, and observability. Every call generates logs, transcripts, and metrics. A mature deployment uses these for quality assurance, model improvement, root cause analysis, and compliance reporting.

Cloud providers and architecture guides, such as the reference material in Google Cloud Architecture Center, provide patterns for building modular, resilient services that you can adapt to your Voice AI stack.
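To illustrate the orchestration layer's role in the stack above, here is a minimal sketch of a per-turn decision: respond, ask a clarifying question, or hand over to a human. Everything in it is an assumption made for illustration, including the `NluResult` and `TurnDecision` types, the 0.6 confidence threshold, the limit of two clarifications, and the prompt wording. A real deployment would take these from its own NLU service and dialogue design.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class NluResult:
    intent: str
    confidence: float  # 0.0 to 1.0, as reported by the NLU component


@dataclass
class TurnDecision:
    action: str   # "respond", "clarify", or "handoff"
    message: str  # text that would be passed to TTS


CONFIDENCE_THRESHOLD = 0.6  # assumed value; tune against real traffic
MAX_CLARIFICATIONS = 2      # assumed value

HANDOFF_MESSAGE = "Let me connect you with an agent."
CLARIFY_MESSAGE = "Sorry, could you rephrase that?"


def decide_turn(
    nlu: NluResult,
    handlers: Dict[str, Callable[[], str]],
    clarifications_so_far: int,
) -> TurnDecision:
    """Pick the next action for one conversational turn."""
    handler = handlers.get(nlu.intent)
    if handler is None or nlu.confidence < CONFIDENCE_THRESHOLD:
        # Unknown or uncertain intent: clarify a limited number of times,
        # then escalate instead of looping.
        if clarifications_so_far >= MAX_CLARIFICATIONS:
            return TurnDecision("handoff", HANDOFF_MESSAGE)
        return TurnDecision("clarify", CLARIFY_MESSAGE)
    try:
        return TurnDecision("respond", handler())
    except Exception:
        # A failing business-system call should never strand the caller.
        return TurnDecision("handoff", HANDOFF_MESSAGE)


handlers = {"order_status": lambda: "Your order has shipped."}
print(decide_turn(NluResult("order_status", 0.92), handlers, 0))
```

Keeping this decision in one place makes the escalation policy easy to review and test independently of any particular ASR, NLU, or TTS vendor.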

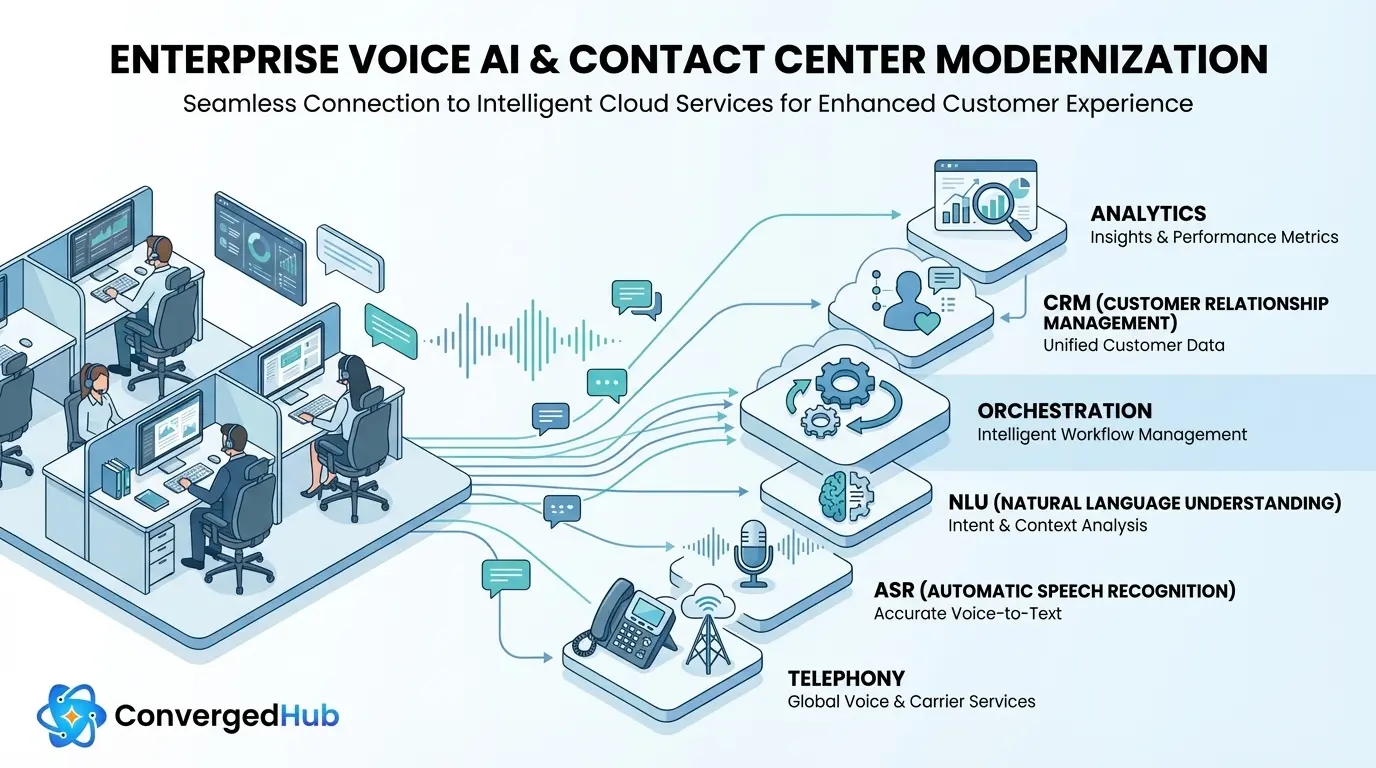

Choosing The Right Deployment Model

Deployment choices are strategic, not only technical. Where your Voice AI platform runs determines latency, data residency, control, and upgrade flexibility.

Most enterprises converge on one of three patterns:

- Cloud-native. Voice AI services run in public cloud regions close to your main customer bases. This model offers rapid innovation, elastic scaling, and easier global rollouts. Data residency is handled by selecting appropriate regions and designing storage policies that match regulations like the EU General Data Protection Regulation. The trade-off is less control over underlying infrastructure.

- Hybrid. Latency-sensitive or regulated components such as call recording and certain logs stay on premises or in a private cloud, while ASR, NLU, and orchestration run in public cloud. SBCs bridge the environments securely. This pattern is common in financial services and healthcare, where some data must never leave specific networks or countries.

- On-premises. Everything runs inside your data centers. This offers maximum control and can simplify some regulatory approvals, but it shifts full responsibility for capacity, patching, and resilience to internal teams. Innovation cycles can slow significantly without a clear platform strategy.

Latency, data residency, and operational maturity should guide the decision, not habit. Many organizations start hybrid, then move more workloads to cloud as security and governance patterns mature.
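One way to make the data residency constraint explicit is to encode it as a lookup that refuses to process a call outside its permitted regions. The sketch below is illustrative only: the country codes, the region names, and the `pick_region` helper are hypothetical and do not correspond to any specific cloud provider's regions or to actual legal requirements.

```python
from typing import Dict, List, Optional, Set

# Hypothetical mapping from caller country to the regions where that caller's
# data may be processed, listed in order of preference.
RESIDENCY_REGIONS: Dict[str, List[str]] = {
    "DE": ["eu-central", "eu-west"],
    "FR": ["eu-west", "eu-central"],
    "US": ["us-east", "us-west"],
}


def pick_region(country: str, healthy_regions: Set[str]) -> Optional[str]:
    """Return the first permitted region that is currently healthy.

    Returns None when no compliant region is available, so the caller can
    route to a human queue instead of processing data out of region.
    """
    for region in RESIDENCY_REGIONS.get(country, []):
        if region in healthy_regions:
            return region
    return None


print(pick_region("DE", {"eu-west", "us-east"}))
```

Returning `None` instead of silently falling back to any healthy region keeps the residency decision visible to whoever owns the routing policy.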

Integrations Across The CX Stack

Voice AI value comes from what it can see and do inside your enterprise. That hinges on deep, secure integrations across the CX and operations stack.

Key integration domains include:

- CRM and customer data platforms. Real-time access to profiles, contracts, orders, and previous interactions allows the Voice AI to personalize experiences and avoid repetitive questions. Updates such as call outcomes and case notes should flow back into CRM automatically via well-designed APIs.

- Contact center platforms. Integrations with CCaaS or on-premises platforms control call routing, skills, queues, and screen pops. When the bot needs to transfer, it should pass context, transcripts, and disposition codes so agents can continue seamlessly.

- Workforce management (WFM). Call volumes, handle times, and containment rates feed WFM systems, improving forecasts and staffing decisions. Over time, Voice AI itself can be scheduled as a virtual queue resource.

- Quality assurance and compliance tools. Transcripts, sentiment scores, and detected issues like potential policy violations can be shared with QA systems for scoring and coaching.

- Knowledge bases and content systems. Modern bots often use retrieval-augmented techniques to query enterprise knowledge bases. Ensuring a single source of truth across voice and chat avoids conflicting answers.

Technically, these connections rely on REST or gRPC APIs, webhooks, and event streams. Design guidance from resources such as the Google API Design Guide can help ensure integrations are secure, versioned, and resilient to failures.

Secure authentication and authorization, typically via OAuth 2.0, OpenID Connect, and enterprise identity providers, are essential. Role-based access should define which services can read or write specific data fields to maintain least-privilege access.
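Field-level least privilege can be sketched as an allow-list applied to the context that accompanies a transfer or an event. The example below is a simplified illustration: the service names, field names, and the `build_payload` helper are hypothetical, and a production system would normally enforce this in the API gateway or identity layer, not in application code alone.

```python
import json
from typing import Any, Dict, Set

# Hypothetical allow-list: which fields each downstream service may receive.
ALLOWED_FIELDS: Dict[str, Set[str]] = {
    "agent_desktop": {
        "call_id", "customer_id", "detected_intent",
        "disposition_code", "transcript",
    },
    "wfm": {"call_id", "disposition_code"},
}


def build_payload(context: Dict[str, Any], service: str) -> Dict[str, Any]:
    """Return only the fields the target service is allowed to read.

    Unknown services receive an empty payload (deny by default).
    """
    allowed = ALLOWED_FIELDS.get(service, set())
    return {key: value for key, value in context.items() if key in allowed}


# Illustrative call context; all values are made up.
context = {
    "call_id": "c-1001",
    "customer_id": "cust-42",
    "detected_intent": "billing_dispute",
    "disposition_code": "TRANSFER_BILLING",
    "transcript": ["I was charged twice.", "Let me connect you with an agent."],
}

print(json.dumps(build_payload(context, "wfm"), sort_keys=True))
```

The agent desktop receives the full context needed to continue the conversation, while workforce management sees only what it needs for forecasting.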

From Pilot To Production At Scale

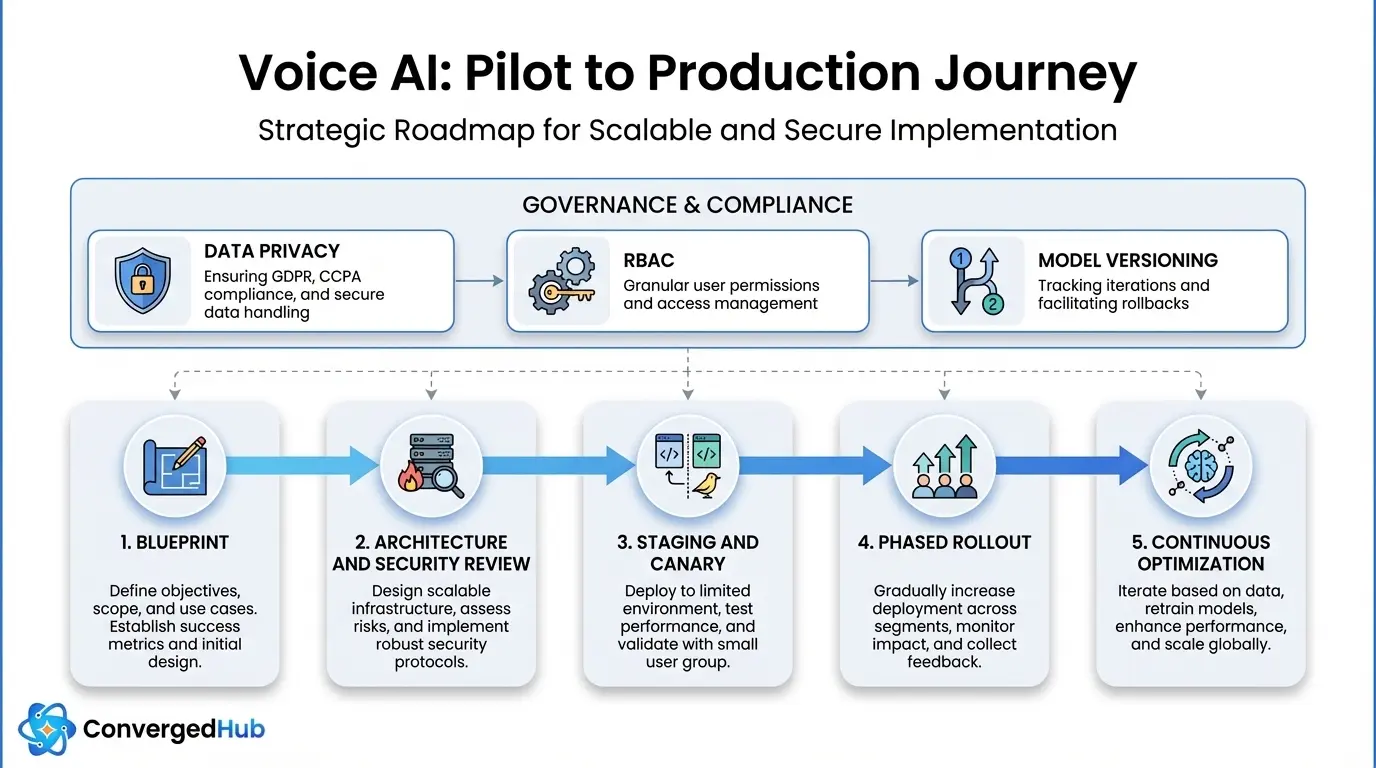

Moving from a promising pilot to a production-grade Enterprise Voice AI Deployment is best treated as a structured program, not a big-bang launch. A repeatable path typically includes these stages:

- Blueprint and success metrics. Map top call drivers, segment journeys by value and risk, and choose use cases that balance business impact with complexity. Define quantitative goals such as target containment, average handle time reduction, and CSAT improvement before expanding beyond pilot.

- Architecture and security review. Work with enterprise architects, security, and network teams to validate the end-to-end design. Threat model data flows, define encryption in transit and at rest, and standardize secrets management. The NIST cryptographic standards guidance is a useful reference when aligning on algorithms and key management practices.

- Staging, load, and canary tests. Stand up a staging environment that mirrors production telephony as closely as possible. Run synthetic load tests that simulate peak call patterns, then start with canary releases that send a small percentage of traffic to the Voice AI to validate quality and stability.

- Phased rollout with graceful fallback. Increase coverage gradually by business line, region, or call type. Always provide clear escape hatches to human agents and design failover logic if any upstream dependency is impaired. Customers should never experience dead ends due to an AI outage.

- Reliability engineering and tuning. Based on early production data, tune ASR vocabularies, NLU models, prompts, and call flows. Implement autoscaling rules, caching strategies, and connection pooling to support peak hours without degradation. Practices from site reliability engineering, as documented in Google's Site Reliability Workbook, adapt well to Voice AI traffic.

This approach allows teams to learn quickly while keeping risk controlled, and it builds organizational confidence that Voice AI can handle mission critical workloads.
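The canary and fallback stages above can be sketched as a deterministic routing function. This is a minimal illustration under stated assumptions: the destination names (`voice_ai`, `legacy_ivr`, `human_queue`), the health flag, and the hash-bucket approach are choices made for the example. In practice this logic often lives in the SBC or contact center routing engine.

```python
import hashlib


def in_canary(call_id: str, percent: int) -> bool:
    """Deterministically place a call in the canary group.

    Hashing the call ID gives a stable bucket from 0 to 99, so the same call
    is always routed the same way and the split stays close to `percent`.
    """
    digest = hashlib.sha256(call_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


def route_call(call_id: str, canary_percent: int, voice_ai_healthy: bool) -> str:
    """Choose a destination for an inbound call."""
    if not voice_ai_healthy:
        # Graceful fallback: never leave the caller at a dead end.
        return "human_queue"
    if in_canary(call_id, canary_percent):
        return "voice_ai"
    return "legacy_ivr"


# Roughly 5% of these illustrative call IDs should reach the Voice AI.
sample = [f"call-{i}" for i in range(10_000)]
share = sum(route_call(c, 5, True) == "voice_ai" for c in sample) / len(sample)
print(f"canary share: {share:.1%}")
```

Raising `canary_percent` step by step gives the phased rollout described above, and setting it to 0 acts as an immediate rollback.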

Governance, Operations And Change

Technology is only half of a successful Enterprise Voice AI Deployment. Long term success depends on governance, operational excellence, and change management across the organization.

Governance and compliance

- Data classification and retention. Classify transcripts, audio, and derived analytics according to internal data policies. Define retention periods, anonymization or redaction rules, and data residency for each class of data.

- Consent and transparency. Provide clear notices when customers interact with automated systems, especially when calls are recorded or used for training models. Privacy frameworks such as the NIST Privacy Framework can guide policy design.

- Role-based access and approvals. Limit who can publish changes to call flows, prompts, or models. Introduce change control processes with testing and sign-off, similar to production software releases.

- Model lifecycle management. Track versions of NLU models, prompts, and policies. Maintain rollback paths and use champion-challenger approaches when testing new models.
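The retention and redaction rules in the governance list above can be expressed as small, testable policies. The sketch below is deliberately simplistic: the two regular expressions catch only obvious card-like digit runs and email addresses, the retention periods are made-up values, and none of it substitutes for a dedicated PII detection service or for the periods your legal team defines.

```python
import re
from datetime import datetime, timedelta, timezone

# Simplistic patterns for illustration only; real PII detection needs more.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

# Hypothetical retention periods per data class, in days.
RETENTION_DAYS = {"audio": 30, "transcript": 90, "analytics": 365}


def redact(text: str) -> str:
    """Replace card-like numbers and email addresses with placeholders."""
    text = CARD_RE.sub("[REDACTED_CARD]", text)
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


def is_expired(data_class: str, created_at: datetime, now: datetime) -> bool:
    """Return True when a record has outlived its class's retention period."""
    return now - created_at > timedelta(days=RETENTION_DAYS[data_class])


print(redact("Card 4111 1111 1111 1111, mail jane.doe@example.com now"))

created = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(is_expired("audio", created, created + timedelta(days=31)))
```

Keeping the policy in data (`RETENTION_DAYS`) instead of scattering it through code makes it easier for risk and privacy teams to review.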

Operations and continuous optimization

- Stand up a Voice AI operations function with clear on-call rotations, runbooks, and dashboards.

- Monitor metrics such as containment rate, transfer reasons, error codes, NLU confidence, latency, abandonment, and CSAT. Use these to prioritize improvements and catch regressions early.

- Feed labeled interactions back into training pipelines to improve recognition and intent coverage over time.
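A few of the operational metrics listed above can be computed directly from call records. The sketch below assumes a hypothetical record shape with an `outcome` of `contained`, `transferred`, or `abandoned` and an optional `transfer_reason`. Your platform's export format and its exact definition of containment may differ.

```python
from collections import Counter
from typing import Dict, List, Optional


def summarize(calls: List[Dict[str, Optional[str]]]) -> Dict[str, object]:
    """Compute containment rate, abandonment rate, and top transfer reason."""
    total = len(calls)
    if total == 0:
        return {
            "containment_rate": 0.0,
            "abandonment_rate": 0.0,
            "top_transfer_reason": None,
        }
    outcomes = Counter(call["outcome"] for call in calls)
    reasons = Counter(
        call["transfer_reason"]
        for call in calls
        if call["outcome"] == "transferred" and call.get("transfer_reason")
    )
    top = reasons.most_common(1)
    return {
        "containment_rate": outcomes["contained"] / total,
        "abandonment_rate": outcomes["abandoned"] / total,
        "top_transfer_reason": top[0][0] if top else None,
    }


# Illustrative records only.
calls = [
    {"outcome": "contained", "transfer_reason": None},
    {"outcome": "contained", "transfer_reason": None},
    {"outcome": "transferred", "transfer_reason": "low_confidence"},
    {"outcome": "abandoned", "transfer_reason": None},
]
print(summarize(calls))
```

Tracking the top transfer reason alongside the containment rate helps show whether a drop in containment comes from recognition problems, missing intents, or customer preference.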

Organizational alignment and change

- Define a RACI model that clarifies ownership across CX, IT, operations, security, and product teams.

- Prepare agents and supervisors with training on how Voice AI changes call patterns, what they should expect to see in transfers, and how to use any agent-assist capabilities.

- Communicate openly about goals and impact so teams see Voice AI as augmentation of service, not an opaque black box.

- Avoid fragmented deployments by establishing a central conversational AI center of excellence that sets standards and shares learnings.

With this foundation, enterprises can scale Voice AI across products, regions, and channels while maintaining control, compliance, and customer trust.

Enterprise Voice AI Deployment at scale is not about chasing the latest model. It is about designing an architecture, operating model, and governance structure that can support continuous change across voice and chat.

By treating Voice AI as a core CX platform, aligning it with telephony and contact center strategy, and investing in robust integrations and operations, CX and digital leaders can move beyond pilots to stable, converged experiences that improve outcomes for customers, agents, and the business.