Your best agent can deliver a textbook save, yet walk into coaching with a low QA score because a different reviewer, shift, or channel held them to a different standard. Multiply that by thousands of interactions across voice and chat, and scoring bias silently erodes trust, CSAT, and transformation momentum.

AI QA Calibration is how CX and Digital Transformation leaders stop this. Think of it as an always-on metronome that keeps models, human reviewers, and rubrics in sync so the same interaction earns the same score, regardless of channel, language, accent, intent complexity, or time of day.

This playbook unpacks how to design a golden calibration set, measure inter-rater reliability and bias, govern human-in-the-loop workflows, and roll out explainable AI scoring at enterprise scale. The goal is simple but powerful: fair, defensible QA that accelerates coaching, compliance, and converged experiences across every customer touchpoint.

The cost of QA bias

Most contact centers assume QA bias is a minor annoyance. In reality, it is a silent tax on every transformation initiative you run.

When two identical conversations receive different scores, several things happen:

- Agents disengage: Coaching feels arbitrary, so top performers stop trusting feedback.

- Supervisors waste time: Debates about scores replace data-driven skill building.

- Leaders fly blind: Queue, bot, or policy decisions rest on noisy QA signals.

- Compliance risk grows: Regulators care about consistent controls, not your intentions.

The shift to digital and AI only amplifies the problem. As McKinsey research on AI-powered contact centers highlights, interactions are fragmenting across channels and automation layers. Without a unified, calibrated approach, voice calls, chats, and AI-assisted conversations will be judged by different yardsticks.

For CX and Innovation leaders, biased QA does not just hurt fairness. It slows down everything from new bot launches to policy changes, because you cannot reliably tell whether performance deltas reflect customer reality or reviewer preference.

What is AI QA Calibration?

AI QA Calibration is a structured, recurring process that aligns models, human reviewers, and rubrics so the same interaction receives the same score, every time.

It goes beyond classic calibration, where a few supervisors review a handful of calls each month. Instead, AI QA Calibration treats fairness as a continuous system with three moving parts:

- One converged rubric that defines behavioral, procedural, and compliance standards across voice, chat, and messaging, with channel-aware examples.

- AI models that apply that rubric at scale, with balanced sampling and score normalization to avoid channel or queue bias.

- Human-in-the-loop workflows that routinely test, challenge, and refine model judgments.

In practice, this means building explainable scoring models that surface transcript citations for each sub-score, running monthly calibration ceremonies, and versioning rubrics so changes are auditable.
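Rubric versioning can be as lightweight as a release log with semantic version numbers. The sketch below is a minimal illustration, not a prescribed tool: the `RubricRelease` record, the `next_version` and `release` helpers, and the rule for what counts as a major, minor, or patch change are all hypothetical conventions you would adapt to your own governance process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RubricRelease:
    """One entry in a hypothetical rubric changelog."""
    version: str  # semantic version, "MAJOR.MINOR.PATCH"
    notes: str    # release notes: what changed and why


def next_version(current: str, change: str) -> str:
    """Bump a semantic version according to an assumed rubric-change policy.

    major: criteria, weights, or pass thresholds changed (old and new scores not comparable)
    minor: examples or guidance added without changing how criteria are scored
    patch: wording or typo fixes only
    """
    major, minor, patch = (int(part) for part in current.split("."))
    if change == "major":
        return f"{major + 1}.0.0"
    if change == "minor":
        return f"{major}.{minor + 1}.0"
    if change == "patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


def release(log: list[RubricRelease], change: str, notes: str) -> RubricRelease:
    """Append a new release to the changelog and return it."""
    current = log[-1].version if log else "0.0.0"
    entry = RubricRelease(next_version(current, change), notes)
    log.append(entry)
    return entry


changelog: list[RubricRelease] = []
release(changelog, "major", "Initial converged rubric for voice and chat")
release(changelog, "minor", "Add chat-specific examples for the empathy criterion")
release(changelog, "major", "Reweight compliance disclosures")
print([entry.version for entry in changelog])  # ['1.0.0', '1.1.0', '2.0.0']
```

Tagging each AI score with the rubric version it was produced under makes it possible to tell a genuine performance change from a rubric change when trends shift.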

Done well, AI QA Calibration turns QA from a subjective policing function into a reliable measurement backbone that supports converged experiences and data-driven coaching across the entire customer journey.

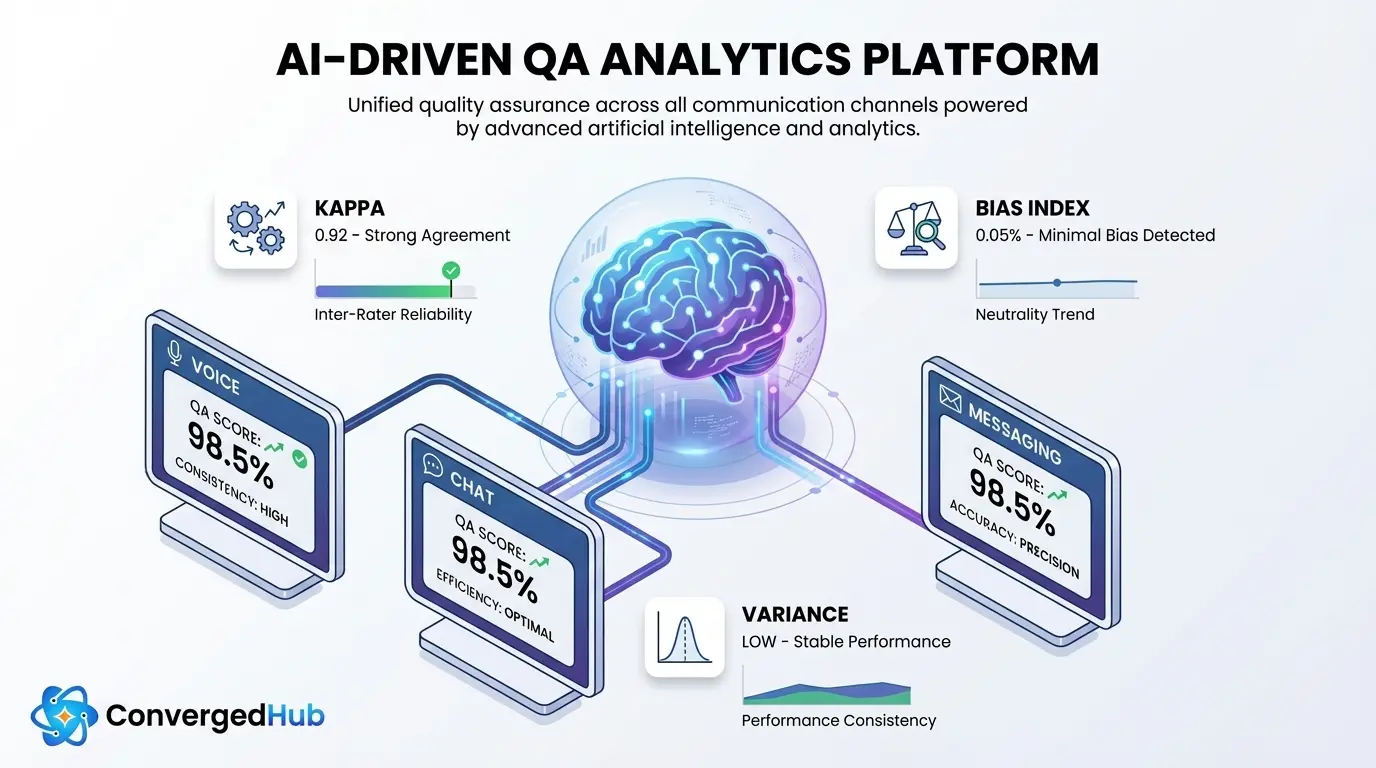

Designing a golden set

The heart of AI QA Calibration is your golden calibration set: a carefully curated collection of interactions that represents the complexity and risk profile of your operation.

For enterprise-scale CX, a strong golden set typically includes:

- High-risk intents: cancellations, complaints, regulatory disclosures, financial advice, collections, vulnerability flags, fraud alerts, and any scenario that can create legal or brand risk.

- Channel and queue diversity: inbound and outbound voice, live chat, messaging, social DMs, and specialist queues like retention or technical support.

- Multilingual and accent coverage: the major languages you support, plus regional accents and code-switching, to ensure the model handles real-world speech and phrasing.

- Edge cases: long silences, overlapping speakers, noisy audio, sarcasm, customers switching channels mid-journey, and bot-to-agent handoffs.

Start with a few hundred interactions, then grow the set as you expand coverage. Each interaction should be fully anonymized and handled under your privacy guidelines. Frameworks such as NIST's guidance on responsible AI and privacy-by-design principles are useful references.
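A simple way to keep the golden set honest is to check coverage per stratum before each calibration cycle. This minimal sketch assumes each interaction carries metadata fields such as `intent`, `channel`, or `language`; the `coverage_gaps` helper and the minimum count per stratum are illustrative, not a standard.

```python
from collections import Counter
from itertools import product


def coverage_gaps(interactions, dimensions, minimum):
    """Report golden-set strata with fewer than `minimum` interactions.

    interactions: list of dicts holding metadata for each anonymized interaction.
    dimensions: maps a metadata field to the values you expect to cover, so strata
    with zero examples are reported as well.
    """
    fields = list(dimensions)
    counts = Counter(tuple(item[f] for f in fields) for item in interactions)
    gaps = {}
    # Enumerate every expected combination, e.g. (intent, channel, language).
    for stratum in product(*(dimensions[f] for f in fields)):
        if counts[stratum] < minimum:
            gaps[stratum] = counts[stratum]
    return gaps


golden = [
    {"intent": "cancellation", "channel": "voice"},
    {"intent": "cancellation", "channel": "voice"},
    {"intent": "cancellation", "channel": "chat"},
]
expected = {"intent": ["cancellation", "complaint"], "channel": ["voice", "chat"]}
print(coverage_gaps(golden, expected, minimum=2))
# {('cancellation', 'chat'): 1, ('complaint', 'voice'): 0, ('complaint', 'chat'): 0}
```

Counting combinations rather than each field separately matters: a set can cover every channel and every intent yet still contain no complaints handled over chat.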

Crucially, every golden set interaction is scored by multiple human reviewers using a double-blind process and your current rubric. Their consensus becomes the benchmark truth against which both humans and models are calibrated.
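How reviewer scores become a consensus is a design choice. One option, sketched here under the assumption of a 0 to 100 scale, is to take the median of the independent reviews and hold back interactions where reviewers disagree widely, so they are discussed in a calibration session rather than averaged away. The `build_benchmark` helper and the 10-point `max_spread` are hypothetical.

```python
from statistics import median


def build_benchmark(reviews, max_spread=10):
    """Turn independent, double-blind reviewer scores into benchmark labels.

    reviews: {interaction_id: [score_reviewer_1, score_reviewer_2, ...]} on a 0-100 scale.
    Returns (benchmark, disputed): the median score for interactions where reviewers
    broadly agree, and the ids whose spread exceeds max_spread, which need a
    calibration discussion before they can serve as benchmark truth.
    """
    benchmark, disputed = {}, []
    for interaction_id, scores in reviews.items():
        if len(scores) < 2:
            raise ValueError(f"{interaction_id}: needs at least two independent reviews")
        if max(scores) - min(scores) > max_spread:
            disputed.append(interaction_id)
        else:
            benchmark[interaction_id] = median(scores)
    return benchmark, disputed


reviews = {"call-001": [88, 92, 90], "chat-002": [60, 85], "call-003": [75, 79]}
print(build_benchmark(reviews))
# ({'call-001': 90, 'call-003': 77.0}, ['chat-002'])
```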

Measuring fairness and drift

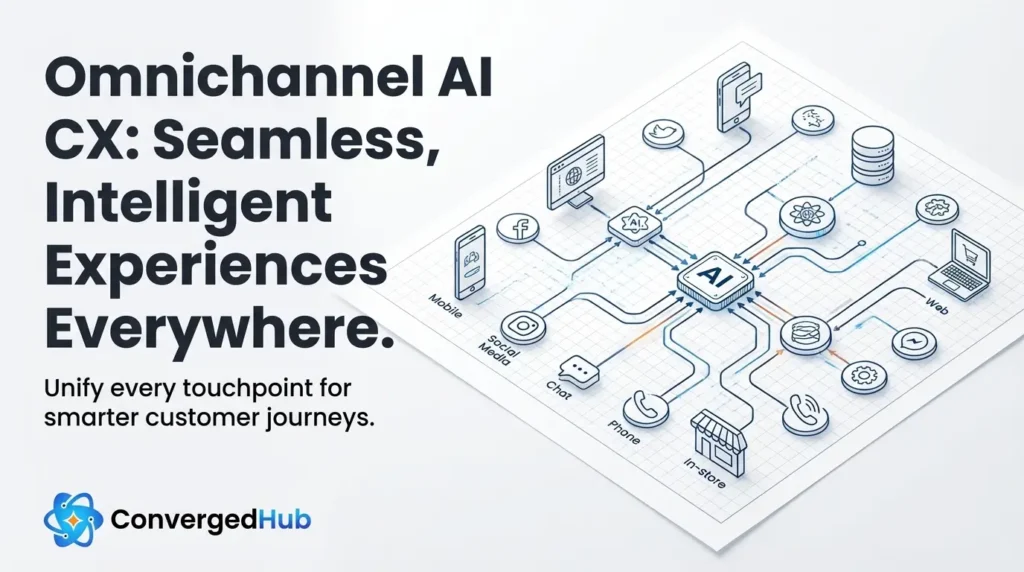

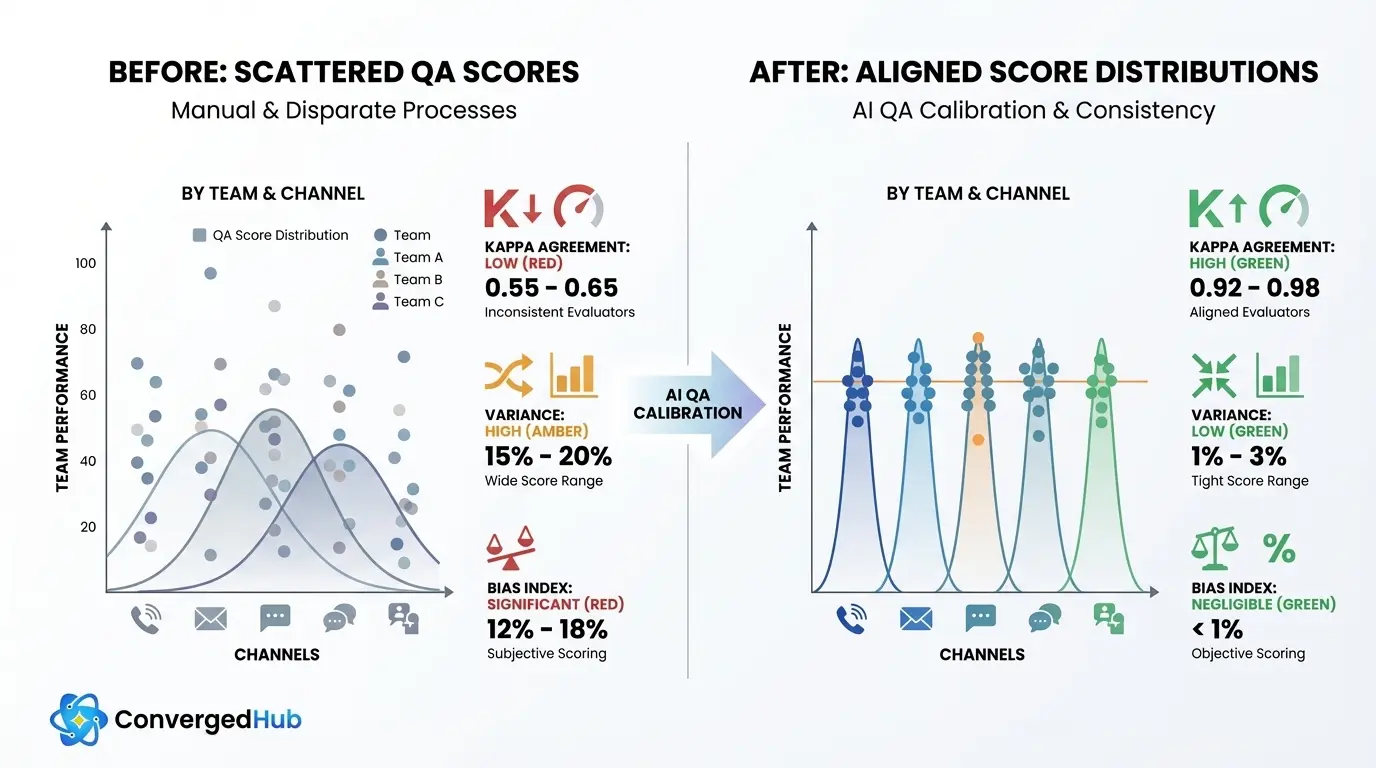

Once your golden set is in place, the next step is to quantify how consistent and fair your QA really is. This is where AI QA Calibration becomes science, not opinion.

Key measures include:

- Inter-rater reliability: Use statistics like Cohen's kappa to measure agreement between pairs of human reviewers, and between the AI and the human consensus (Fleiss' kappa extends the idea to more than two reviewers). For enterprise QA, a kappa above 0.7 is a practical threshold.

- Variance across channels and queues: Track average scores, distributions, and standard deviations by channel, intent, queue, and time of day. Calibration should shrink unexplained differences between these segments, not simply pull every score toward a safe middle.

- Bias indices: Define a bias index as the difference in average scores between groups (teams, regions, languages, tenure bands) after controlling for performance indicators. Aim for a bias index below 5 percent as a working target.
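Both targets are straightforward to compute. The sketch below implements Cohen's kappa for two raters labeling the same items with categories (kappa works on categories, so numeric scores would first be bucketed into bands, or scored per criterion as pass/fail). The `bias_index` function is one possible reading of the definition above, and it is an assumption: it treats the human benchmark as the control for true performance and reports the largest gap between groups in mean AI-minus-benchmark score, as a percentage of a 0 to 100 scale.

```python
from collections import Counter, defaultdict
from statistics import mean


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning categorical labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty list of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: probability both raters pick the same label independently.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if expected == 1:
        return 1.0  # both used one identical label throughout; kappa is undefined, treat as full agreement
    return (observed - expected) / (1 - expected)


def bias_index(records, scale_max=100):
    """Largest between-group gap in mean (AI score - human benchmark), as % of the scale.

    records: iterable of (group, ai_score, benchmark_score). Treating the human
    benchmark as the control for true performance is an assumption of this sketch.
    """
    residuals = defaultdict(list)
    for group, ai_score, benchmark in records:
        residuals[group].append(ai_score - benchmark)
    group_means = [mean(values) for values in residuals.values()]
    return 100 * (max(group_means) - min(group_means)) / scale_max


human = ["pass", "pass", "fail", "fail"]
ai = ["pass", "fail", "fail", "fail"]
print(cohen_kappa(human, ai))  # 0.5

scores = [("en", 90, 88), ("en", 80, 80), ("es", 70, 75), ("es", 85, 88)]
print(bias_index(scores))  # 5.0
```

In this toy data the AI scores English interactions slightly above the benchmark and Spanish ones below it, so the bias index sits exactly at the 5 percent working limit.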

These metrics power dashboards that show whether your models are over-penalizing certain accents, queues, or complex intents. They also help you tune thresholds and normalize scores so that a 90 on chat means the same quality level as a 90 on voice.
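One common way to make a score mean the same thing across channels is to standardize it within its channel and map it back onto a pooled scale. This sketch assumes that true quality is comparably distributed across channels; if one channel genuinely performs worse, this normalization would hide it, so check normalized scores against the golden set before relying on them. `normalize_by_channel` is a hypothetical helper.

```python
from statistics import mean, pstdev


def normalize_by_channel(scores):
    """Map each score onto a pooled scale via its z-score within its own channel.

    scores: list of (channel, raw_score). Assumes true quality is comparably
    distributed across channels, so channel-level shifts are treated as scoring artifacts.
    """
    pooled = [s for _, s in scores]
    pooled_mean, pooled_sd = mean(pooled), pstdev(pooled)
    by_channel = {}
    for channel, s in scores:
        by_channel.setdefault(channel, []).append(s)
    # Per-channel mean and standard deviation, the same figures a variance dashboard would show.
    stats = {c: (mean(v), pstdev(v)) for c, v in by_channel.items()}
    normalized = []
    for channel, s in scores:
        c_mean, c_sd = stats[channel]
        z = (s - c_mean) / c_sd if c_sd else 0.0  # a channel with no spread maps to the pooled mean
        normalized.append((channel, pooled_mean + z * pooled_sd))
    return normalized


raw = [("voice", 80), ("voice", 90), ("chat", 70), ("chat", 80)]
print([(c, round(s, 1)) for c, s in normalize_by_channel(raw)])
# [('voice', 72.9), ('voice', 87.1), ('chat', 72.9), ('chat', 87.1)]
```

Here a 90 on voice and an 80 on chat land on the same normalized score, because each is one standard deviation above its own channel's mean.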

Over time, track model drift by comparing AI scores on new interactions against a small, rotating sample that humans rescore each month.
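A drift check can be as simple as comparing each month's AI scores with the human rescores of the same interactions. In this sketch, `drift_report`, the baseline mean absolute error, and the two-point tolerance are all assumptions; in practice you might also track kappa on the same sample.

```python
from statistics import mean


def drift_report(monthly_samples, baseline_mae, tolerance=2.0):
    """Compare AI scores with human rescores of the same interactions, month by month.

    monthly_samples: {"YYYY-MM": [(ai_score, human_score), ...]}
    baseline_mae: mean absolute error measured when the model was approved.
    A month is flagged when its error exceeds the baseline by more than `tolerance` points.
    """
    report = {}
    for month, pairs in sorted(monthly_samples.items()):
        mae = mean(abs(ai - human) for ai, human in pairs)
        signed = mean(ai - human for ai, human in pairs)  # > 0 means the model scores more generously
        report[month] = {"mae": mae, "signed_delta": signed, "drifting": mae > baseline_mae + tolerance}
    return report


samples = {
    "2024-01": [(85, 84), (70, 72)],
    "2024-02": [(90, 82), (60, 66)],
}
for month, row in drift_report(samples, baseline_mae=2.0).items():
    print(month, row["drifting"])  # 2024-01 False, then 2024-02 True
```

The signed delta complements the absolute error: it shows whether a drifting model has become harsher or more lenient.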

Governance with humans in the loop

Even the best models require disciplined human governance. Without it, AI QA risks scaling bias instead of reducing it. Strong AI QA Calibration programs build clear, repeatable rituals.

Consider the following practices:

- Double-blind reviews: At least two reviewers score each golden set interaction without seeing each other's scores or the AI score. This prevents anchoring and exposes rubric ambiguities.

- Monthly calibration ceremonies: Cross functional sessions where QA, operations, training, and compliance teams review outlier cases, resolve disagreements, and document rubric updates.

- Arbitration and escalation paths: Clear rules for when a third reviewer or QA leader makes the final call, especially on high-risk or disputed interactions.

- Versioned rubrics: Semantic versioning of rubrics and scoring models, with release notes that explain what changed and why. This supports auditability and experimentation.
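Arbitration rules are easiest to audit when they are written down explicitly. The rule below is a hypothetical example, not a recommended policy: two blind scores within five points are averaged, while wide disagreements and all high-risk interactions go to a third reviewer (combined by median), or to the QA lead when no third reviewer is available. `resolve_score` and its parameters are illustrative names.

```python
from statistics import median


def resolve_score(interaction, tolerance=5, third_reviewer=None):
    """Apply a hypothetical arbitration rule to two blind reviewer scores.

    interaction: dict with "scores" (exactly two blind scores) and "high_risk" (bool).
    third_reviewer: optional callable that returns an independent third score.
    Returns (final_score, route) so every decision path is auditable.
    """
    first, second = interaction["scores"]
    if abs(first - second) <= tolerance and not interaction["high_risk"]:
        return (first + second) / 2, "agreed"
    # Disputed or high-risk: a third reviewer decides, or the QA lead if none is available.
    if third_reviewer is None:
        return None, "escalate_to_qa_lead"
    third = third_reviewer(interaction)
    return median([first, second, third]), "third_reviewer"


print(resolve_score({"scores": [88, 90], "high_risk": False}))  # (89.0, 'agreed')
print(resolve_score({"scores": [60, 90], "high_risk": False}))  # (None, 'escalate_to_qa_lead')
print(resolve_score({"scores": [60, 90], "high_risk": False}, third_reviewer=lambda i: 70))  # (70, 'third_reviewer')
```

Returning the route alongside the score lets calibration ceremonies review how often each path is taken.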

Explainability is critical. Each AI score should come with a transparent rationale and transcript snippets that show which utterances drove each sub-score. Resources such as MIT Sloan's guidance on reducing AI bias provide useful design principles for transparency.
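One way to guarantee that every AI sub-score carries evidence is to make citations part of the score's data structure and reject any sub-score without them. The `SubScore` fields and the `explain` helper below are illustrative assumptions, not a particular vendor's output format.

```python
from dataclasses import dataclass, field


@dataclass
class SubScore:
    criterion: str
    score: int
    rationale: str
    evidence: list[int] = field(default_factory=list)  # indices of cited transcript utterances


def explain(transcript, sub_scores):
    """Render each sub-score with the utterances it cites; refuse uncited or invalid citations."""
    lines = []
    for sub in sub_scores:
        if not sub.evidence:
            raise ValueError(f"{sub.criterion}: every sub-score must cite at least one utterance")
        for i in sub.evidence:
            if not 0 <= i < len(transcript):
                raise IndexError(f"{sub.criterion}: utterance {i} is not in the transcript")
        quotes = "; ".join(f'[{i}] "{transcript[i]}"' for i in sub.evidence)
        lines.append(f"{sub.criterion}: {sub.score} ({sub.rationale}) <- {quotes}")
    return "\n".join(lines)


transcript = [
    "Agent: This call may be recorded for quality purposes.",
    "Customer: I want to cancel my plan.",
    "Agent: I can help with that. May I ask what prompted the change?",
]
print(explain(transcript, [
    SubScore("Recording disclosure", 10, "disclosure given at call start", [0]),
    SubScore("Retention discovery", 8, "asked for cancellation reason", [2]),
]))
```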

When agents and supervisors can see both the score and the evidence, QA becomes a trusted coaching partner, not a black box.

Rolling out AI QA at scale

Moving from pilot to enterprise-wide AI QA Calibration is not a big-bang project. It is an iterative rollout that deliberately manages risk and trust. A pragmatic path looks like this:

- Pilot one queue: Choose a contained but important area, such as a single voice or chat queue with clear intents and good data quality.

- Run side by side: For several weeks, score the same interactions with both human QA and AI models. Compare deltas by dimension and track kappa, variance, and bias indices.

- Set pass/fail gates: Proceed only when you consistently hit your targets, for example kappa greater than 0.7 and bias indices under 5 percent across key segments.

- Automate the routine: Let AI handle most volume while humans focus on calibration samples, high-risk intents, and coaching follow-up.

- Expand coverage: Add new queues, languages, and channels, keeping one converged rubric but enabling channel-aware features where needed.
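The pass/fail gate from the side-by-side phase can be expressed as a small check over weekly metrics per segment. This sketch assumes kappa and bias index have already been computed for each segment every week; `gate_passes` is a hypothetical helper, the four-week minimum is an assumed reading of "several weeks", and the thresholds mirror the working targets used throughout this playbook.

```python
def gate_passes(weekly_metrics, min_kappa=0.7, max_bias_pct=5.0, min_weeks=4):
    """Decide whether a side-by-side pilot may proceed to wider rollout.

    weekly_metrics: one dict per week, mapping segment -> {"kappa": float, "bias_index_pct": float}.
    Every segment must clear both thresholds in every week ("consistently"), and
    min_weeks is a hypothetical reading of "several weeks".
    """
    failures = []
    if len(weekly_metrics) < min_weeks:
        failures.append(f"only {len(weekly_metrics)} week(s) of side-by-side data, need {min_weeks}")
    for week, segments in enumerate(weekly_metrics, start=1):
        for segment, m in segments.items():
            if m["kappa"] <= min_kappa:
                failures.append(f"week {week}, {segment}: kappa {m['kappa']:.2f} is not above {min_kappa}")
            if m["bias_index_pct"] >= max_bias_pct:
                failures.append(
                    f"week {week}, {segment}: bias index {m['bias_index_pct']:.1f}% is not under {max_bias_pct}%"
                )
    return not failures, failures


good_week = {"voice": {"kappa": 0.78, "bias_index_pct": 3.1}, "chat": {"kappa": 0.74, "bias_index_pct": 4.2}}
weak_week = {"voice": {"kappa": 0.78, "bias_index_pct": 3.1}, "chat": {"kappa": 0.66, "bias_index_pct": 4.2}}
print(gate_passes([good_week] * 4))  # (True, [])
passed, reasons = gate_passes([good_week] * 3 + [weak_week])
print(passed, reasons)  # False ['week 4, chat: kappa 0.66 is not above 0.7']
```

Listing every failing week and segment, rather than returning a bare boolean, gives the calibration ceremony a concrete agenda when the gate is not met.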

Along the way, track KPIs such as QA hours saved, coaching uplift (improved agent scores after feedback), CSAT and NPS improvements, and first-contact resolution gains. Also watch for risks such as overfitting to a static golden set, harmful proxy variables, and privacy gaps.

For converged platforms that span voice and chat, AI QA Calibration becomes the backbone that keeps customer experience aligned, fair, and continuously improving.

As AI, automation, and converged experiences reshape service, the question is no longer whether you can score every interaction but whether you can score them fairly.

AI QA Calibration gives CX and Digital Transformation leaders a disciplined way to align humans, models, and rubrics so QA becomes a trusted source of truth. With a golden calibration set, measurable fairness targets, and human-in-the-loop governance, you can end scoring bias, accelerate coaching, and unlock enterprise-level insight from every conversation.

The organizations that invest in this discipline today will be the ones that turn AI QA from a compliance checkbox into a strategic advantage.