Every CX leader in a hospital, bank, or public agency is hearing the same pitch right now: generative AI can handle most of your calls, slash wait times, and delight customers. For regulated industries, that promise comes with a quieter and far more important second line: do this wrong and you may be explaining an incident to regulators while it runs on the front page.

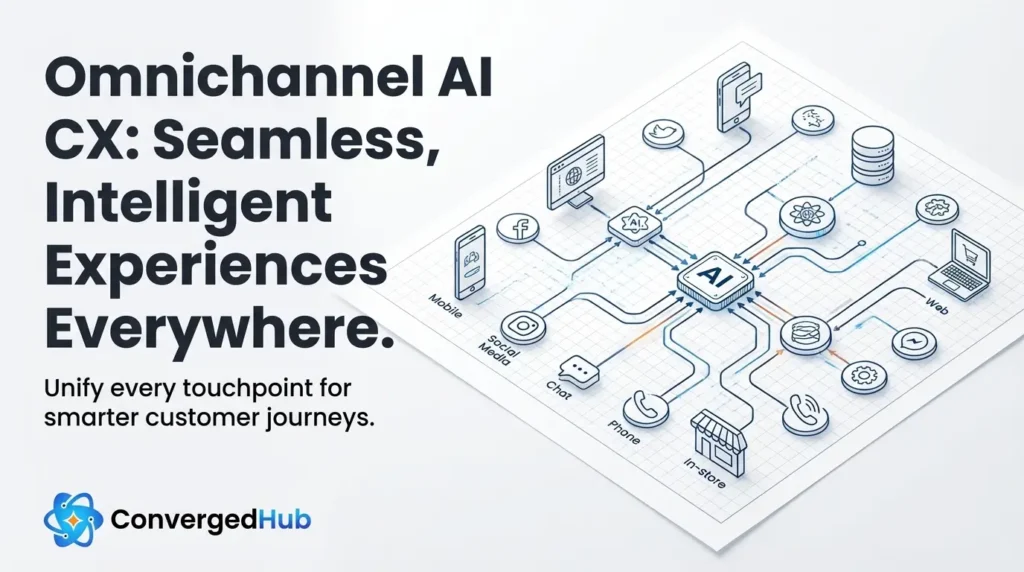

AI Based Customer Support is no longer limited to simple chatbots. Modern platforms orchestrate the same intelligence across voice, chat, messaging, and even agent desktops, creating converged experiences where a single conversation can move fluidly between channels. In healthcare, finance, and the public sector, that convergence amplifies both value and risk, because sensitive data now flows through a unified AI layer at scale.

Regulation is not just a compliance checklist for these industries; it defines the boundaries of what trustworthy service looks like. Frameworks such as HIPAA, PCI DSS, and GDPR already describe exactly how personal data may be collected, processed, retained, and audited. The question for CX and digital transformation leaders is how to align AI Based Customer Support with those rules without strangling innovation.

This article maps the capabilities of AI Based Customer Support to the concrete expectations of HIPAA, PCI DSS, and GDPR, with a focus on consent management, data minimization, encryption, audit logging, and explainable decisions. Along the way, it highlights design patterns like real-time redaction and human-in-the-loop controls that allow you to automate safely, not just quickly.

If you approach these requirements as a late-stage legal review, AI will stall at the proof-of-concept stage. Treated as design inputs, however, they become a blueprint for differentiated service: faster, more personal, and explicitly trustworthy. That is the opportunity for CX and innovation leaders in regulated environments.

Why Compliance Must Lead AI

In many consumer sectors, teams launch AI pilots with a "move fast and fix governance later" mindset. Regulated organizations do not get that luxury. A hospital, retail bank, or revenue authority must assume that every automated conversation may be subject to legal discovery, regulatory audit, or public records requests. When AI answers the phone, compliance is already in the room.

The risk profile also looks different once AI connects directly to patients, account holders, taxpayers, or benefit recipients. Misrouted data, ambiguous consent flows, or a hallucinated answer are not just customer experience gaps; they can be interpreted as unauthorized disclosure, unfair treatment, or failure to follow documented procedures. Typical failure patterns include:

- Silent capture of sensitive data in training logs or analytics tools

- Agents relying on black-box AI suggestions with no record of rationale

- Unclear separation between PCI scoped payment flows and general conversations

Regulators are increasingly explicit that artificial intelligence must follow the same underlying principles as any other processing of personal data. The NIST AI Risk Management Framework outlines practices for trustworthy AI, emphasizing governance, data quality, transparency, and human oversight. The United Kingdom Information Commissioner's Office offers guidance on artificial intelligence and data protection that reinforces many of the same themes.

For CX and digital leaders, the implication is clear: compliance cannot be a sign-off step at the end of an AI program. It needs to shape channel strategy, architecture, and vendor selection from the start. When privacy, security, and risk requirements inform design, AI Based Customer Support can actually simplify compliance by standardizing logic and data flows across voice and digital channels instead of duplicating risk in every system.

Instead of asking how much call volume a bot can deflect, the more strategic question in regulated environments is how AI can help prove that every interaction followed the rules. That reframing turns automation into a compliance asset, not just an operational shortcut.

Map Journeys to Rulesets

The starting point for compliant AI Based Customer Support is not the model; it is a precise map of customer journeys, data elements, and applicable rules. A single inbound call to a healthcare contact center, for example, may cross appointment scheduling, clinical advice, and billing questions, each with different regulatory expectations.

Rather than treating regulation as abstract policy, leading teams link concrete steps in each journey to specific obligations in HIPAA, PCI DSS, and GDPR.

| Scenario | Primary rule set | Implication for AI Based Customer Support |
|---|---|---|
| Patient shares symptoms over voice bot | HIPAA Privacy Rule | Treat transcripts and audio as protected health information, limit access, and log who viewed or exported data. |
| Customer reads card number to pay a fee | PCI DSS | Isolate payment capture in a PCI scoped flow, avoid storing full card data in AI logs, and restrict which systems can access tokens. |
| Citizen updates contact details via chat | GDPR | Collect only necessary fields, record the lawful basis, and respect rights to access, correction, and erasure. |

Once these journeys are sketched, link them to your record of processing activities and data protection impact assessments. For European operations, GDPR expects organizations to document why they process personal data, which systems are involved, and how long information is retained. AI adds another processing layer, so its role must be explicitly captured rather than hidden behind a generic chat or IVR label. A workable inventory process covers four steps:

- Catalog every inbound and outbound channel where conversational AI may operate, including agent assist use cases.

- For each journey, list the data fields that may surface, from account identifiers to unstructured notes.

- Associate each combination of journey and data with the relevant rule set and risk rating.

- Decide which parts can be safely automated, which require human review, and where to insert explicit consent steps.
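
The inventory steps above can be kept as structured data rather than slides, which makes them easy to query when an auditor asks a question. What follows is a minimal sketch, not a prescribed schema: the `JourneyStep` fields, the 1 to 3 risk scale, and the `Handling` options are all illustrative assumptions, and the three entries simply mirror the scenarios in the table.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class RuleSet(Enum):
    HIPAA = "HIPAA"
    PCI_DSS = "PCI DSS"
    GDPR = "GDPR"


class Handling(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human_review"
    CONSENT_REQUIRED = "consent_required"


@dataclass(frozen=True)
class JourneyStep:
    journey: str                      # hypothetical journey identifier
    channel: str                      # e.g. "voice", "chat", "agent_assist"
    data_fields: tuple[str, ...]      # data elements that may surface
    rule_sets: tuple[RuleSet, ...]    # obligations that apply to this step
    risk: int                         # assumed scale: 1 (low) to 3 (high)
    handling: Handling


# Example entries mirroring the three scenarios in the table above.
INVENTORY = [
    JourneyStep("symptom_intake", "voice", ("symptoms", "patient_id"),
                (RuleSet.HIPAA,), 3, Handling.HUMAN_REVIEW),
    JourneyStep("fee_payment", "voice", ("card_number",),
                (RuleSet.PCI_DSS,), 3, Handling.AUTOMATE),
    JourneyStep("contact_update", "chat", ("email", "phone"),
                (RuleSet.GDPR,), 1, Handling.AUTOMATE),
]


def steps_for(rule_set: RuleSet, inventory=INVENTORY) -> list[JourneyStep]:
    """All journey steps that fall under a given rule set."""
    return [step for step in inventory if rule_set in step.rule_sets]


def automated_high_risk(inventory=INVENTORY) -> list[JourneyStep]:
    """High-risk steps that are fully automated: candidates for a second look."""
    return [step for step in inventory
            if step.risk >= 3 and step.handling is Handling.AUTOMATE]
```

A query such as `automated_high_risk()` returns the payment step here, which is the kind of prompt a risk team might use to confirm that the PCI flow really is isolated before leaving it automated.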

This discipline has a practical payoff. When auditors ask how AI affects compliance with HIPAA, PCI DSS, or GDPR, you can answer with diagrams, data inventories, and policy controls tied to specific journeys, rather than a vague statement that the vendor encrypts everything.

Designing for Digital Consent

In regulated industries, consent is not a pop-up; it is a continuous relationship. AI Based Customer Support changes that relationship because the system can infer, remember, and reuse information across channels. That power needs to be constrained by explicit choices that customers understand and can revisit.

Under GDPR, consent is only one of several lawful bases for processing, but all rely on transparency about what data is collected and why. HIPAA permits covered entities to use protected health information for treatment, payment, and health care operations, and generally requires patient authorization for other purposes. Financial regulators focus on suitability, fairness, and clear disclosure. In each case, the front door to AI must respect these boundaries.

Practically, this means designing consent as part of the conversational flow, not as legal text that users never read. In voice channels, the system can open with a concise explanation that calls may be processed by AI for faster service, that sensitive information will be protected, and that a human agent is available on request. In chat and messaging, short just-in-time notices can appear the first time the user shares medical, financial, or identity information.

- Layered explanations: Start with a plain-language summary and allow users to drill into more detail only if they want it.

- Granular choices: Offer options such as allowing anonymized data for model improvement separately from using data to personalize service.

- Data minimization: Configure prompts, entity extraction, and integrations so the AI collects only what is needed to complete the task.

- Reversible decisions: Give customers simple ways to change preferences or request deletion of AI conversation history where law permits.

Critically, consent and purpose limitations need to be machine-readable. That means storing metadata about consent status, channel, timestamp, and applicable policies alongside interaction logs. When regulators or internal auditors review a case, they should be able to see not only what the AI did, but also what authority it had to use each piece of data.
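
One way to picture consent metadata that a system can actually check is a small record per customer, purpose, and point in time. The sketch below is illustrative only: `ConsentRecord`, its fields, and the purpose names are assumptions, and it models consent-based purposes alone, not the other lawful bases an organization may rely on.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ConsentRecord:
    customer_id: str
    purpose: str          # hypothetical purposes, e.g. "personalization"
    granted: bool         # False records a withdrawal
    channel: str          # where the choice was captured
    recorded_at: datetime
    policy_version: str   # which notice text the customer saw


def current_consent(records, customer_id, purpose):
    """Most recent record for this customer and purpose, or None."""
    matches = [r for r in records
               if r.customer_id == customer_id and r.purpose == purpose]
    return max(matches, key=lambda r: r.recorded_at, default=None)


def may_use(records, customer_id, purpose) -> bool:
    """Deny by default: missing and withdrawn consent both mean no."""
    latest = current_consent(records, customer_id, purpose)
    return latest is not None and latest.granted


RECORDS = [
    ConsentRecord("c-1", "personalization", True, "voice",
                  datetime(2024, 1, 5, tzinfo=timezone.utc), "v1"),
    ConsentRecord("c-1", "personalization", False, "chat",
                  datetime(2024, 3, 1, tzinfo=timezone.utc), "v1"),
    ConsentRecord("c-1", "service_delivery", True, "voice",
                  datetime(2024, 1, 5, tzinfo=timezone.utc), "v1"),
]
```

Because withdrawals are stored as new records rather than overwriting old ones, the history itself becomes evidence of what authority existed at the time of each interaction.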

Protect Data at Every Hop

Every AI-powered interaction is a potential high-bandwidth leak of sensitive information. Voice recordings, chat transcripts, and behavioral signals flow through speech recognition, natural language understanding, orchestration layers, and downstream systems. For regulated industries, secure design means protecting each hop in this path, not just the database at the end.

At a minimum, traffic between clients, channels, and AI services should use strong transport encryption such as TLS 1.2 or higher, with data at rest encrypted using modern algorithms and managed keys. Segment environments that handle regulated workloads, and keep development, testing, and production clearly separated. Payment flows governed by PCI DSS often require isolated network segments or providers that certify compliance with the current standard.
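
As a small illustration of the transport requirement, a client written in Python can refuse anything older than TLS 1.2 using the standard library `ssl` module. This is a sketch of one hop only; the function name `strict_client_context` is an assumption, and real deployments usually enforce the same floor at load balancers and gateways as well.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Client-side TLS context that rejects protocol versions below TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

The returned context can be passed to standard library clients, for example `urllib.request.urlopen(url, context=strict_client_context())`.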

Beyond raw encryption, leading implementations use real-time redaction and tokenization to keep sensitive elements out of general AI logs. Speech analytics can strip or mask card numbers, national identifiers, or clinical codes before text is passed to large language models. Tokenization services can replace those values with non-sensitive references that only a tightly controlled vault can reverse.
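
To make the redaction and tokenization idea concrete, here is a simplified sketch for card numbers in transcript text. It is not a production design: the regular expression, the Luhn checksum filter, and the in-memory `TokenVault` are illustrative assumptions, and a real vault would be a separate, access-controlled service inside the PCI scoped environment. Spoken digits ("four one one one") would also need normalizing before a pattern like this could match.

```python
import re
import secrets

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used here to reduce false positives on long numbers."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


class TokenVault:
    """In-memory stand-in for a tightly controlled tokenization service."""

    def __init__(self):
        self._store = {}

    def tokenize(self, value: str) -> str:
        token = "tok_" + secrets.token_hex(8)
        self._store[token] = value
        return token

    def detokenize(self, token: str) -> str:
        return self._store[token]


def redact_cards(text: str, vault: TokenVault) -> str:
    """Replace Luhn-valid card numbers with vault tokens before logging."""
    def replace(match):
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            return vault.tokenize(digits)
        return match.group()  # leave other long numbers untouched
    return CARD_CANDIDATE.sub(replace, text)
```

Only the redacted text would be passed on to language models or general logs; the mapping from token back to card number stays inside the vault.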

Architecture choices also matter. Many organizations in healthcare and finance favor retrieval-augmented generation, where models consult approved knowledge bases and systems of record at runtime rather than training directly on customer data. Combined with strict configuration of any third-party foundation models not to retain prompts or responses, this reduces the blast radius of a breach and aligns better with data minimization principles.

Security teams do not need to start from scratch. The Open Worldwide Application Security Project offers a Machine Learning Security Top 10 that highlights common weaknesses, and existing frameworks such as the NIST Cybersecurity Framework can guide identity, access, and logging controls around AI workloads. What matters for CX leaders is that security and architecture decisions are visible and explainable enough to discuss with risk and compliance partners, not hidden inside a vendor black box.

Operationalize Governance and Audit

The most sophisticated encryption strategy does not help if you cannot reconstruct what actually happened in a disputed interaction. Regulators, ombuds teams, and internal investigators will expect you to show not only what a customer said and what the AI replied, but also how decisions were made.

That expectation changes how you design logging and observability for AI Based Customer Support. Traditional call centers already record audio and store basic metadata such as time, queue, and agent. With AI, you also need structured records of prompts, intermediate reasoning steps where feasible, policy checks, data sources consulted, and any escalations to humans.

- Conversation and event logs: Store transcripts, audio references, and state transitions with strong access controls and retention rules aligned to your regulatory obligations.

- Decision tracing: Capture which knowledge sources and business rules influenced each answer, so agents and auditors can see why the system responded a certain way.

- Model and policy versioning: Track which model configuration and policy set was active at the time of each interaction so changes can be correlated with outcomes.

- Performance and fairness metrics: Monitor error rates, escalation patterns, and outcomes for different customer segments to detect potential bias or degradation.
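
The logging elements above can be combined into one structured event per AI action. The sketch below assumes a hypothetical `AuditEvent` schema and adds hash chaining so that later edits to stored events become detectable; the chaining is an extra technique introduced here for illustration, not something the frameworks discussed in this article prescribe.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditEvent:
    interaction_id: str
    timestamp: str               # ISO 8601, UTC
    event_type: str              # e.g. "ai_response", "escalation"
    model_version: str           # model configuration active at the time
    policy_version: str          # policy set active at the time
    sources: tuple = ()          # knowledge sources consulted
    rules_applied: tuple = ()    # business rules that influenced the answer
    escalated_to_human: bool = False


class AuditLog:
    """Append-only log where each entry's hash covers the previous entry."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, event: AuditEvent) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        payload = json.dumps(asdict(event), sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self.entries.append({"event": payload, "prev": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; False if any stored entry was altered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            expected = hashlib.sha256(
                (prev_hash + entry["event"]).encode("utf-8")).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True
```

Recording `model_version` and `policy_version` on every event is what lets a later review correlate a change in outcomes with a specific configuration change.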

In jurisdictions covered by GDPR and similar laws, customers may have the right to receive meaningful information about automated decisions. That does not require exposing model weights, but it does require the ability to explain factors that influenced outcomes in accessible language. Well-designed audit trails give your human agents the context they need to answer such questions with confidence.

Governance capabilities are not only for regulators. They create a feedback loop for product and operations teams: seeing where AI hands off to humans, where it struggles, and where policies are too strict or too lax. Over time, this turns AI Based Customer Support into a learning system that improves both compliance and customer experience.
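
As one example of that feedback loop, escalation rates can be compared across customer segments to spot where the AI hands off unusually often. This is a rough sketch under stated assumptions: the `segment` and `escalated` field names and the 0.2 gap threshold are hypothetical, and a flagged gap is a prompt for investigation, not proof of bias.

```python
from collections import defaultdict


def escalation_rates(interactions):
    """Share of interactions per segment that were escalated to a human."""
    totals = defaultdict(int)
    escalated = defaultdict(int)
    for item in interactions:
        totals[item["segment"]] += 1
        if item["escalated"]:
            escalated[item["segment"]] += 1
    return {segment: escalated[segment] / totals[segment] for segment in totals}


def flag_disparities(rates, max_gap=0.2):
    """Segments whose rate exceeds the lowest segment's rate by more than max_gap."""
    if not rates:
        return []
    baseline = min(rates.values())
    return sorted(segment for segment, rate in rates.items()
                  if rate - baseline > max_gap)
```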

Putting Humans Back in Control

There is intense pressure to maximize automation rates, but in regulated sectors the more important metric is safe automation. That means building AI services that know their limits and hand control back to humans gracefully when risk, ambiguity, or emotion is high.

Start by classifying intents and scenarios by regulatory and reputational risk. Routine address updates or balance inquiries may be fully automated. Complex medical advice, loan decisions, or benefit eligibility disputes may require human oversight. Between these extremes lies a large middle layer where AI can assist but not decide alone.

- Assisted decision making: AI drafts responses or recommendations that human agents approve, edit, or reject, with the final decision clearly attributed to the human.

- Dynamic escalation: Policies detect trigger phrases, sentiment, or data patterns that indicate higher risk and automatically route to specialized teams.

- Expert review queues: Certain actions, such as changes to clinical records or high-value financial transactions, always enter a review queue even if AI collected the data.
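
These three patterns can be expressed as a small routing policy that runs before the AI acts. The sketch below is illustrative: the intent names, trigger phrases, risk tiers, and the sentiment scale from -1 to 1 are all assumptions, and a real policy would be owned jointly by operations and compliance.

```python
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"                    # AI completes the task
    AGENT_ASSIST = "agent_assist"            # AI drafts, a human approves
    REVIEW_QUEUE = "review_queue"            # always reviewed by an expert
    HUMAN_ESCALATION = "human_escalation"    # hand off to a person now


# Hypothetical risk classification of intents.
INTENT_RISK = {
    "address_update": "low",
    "balance_inquiry": "low",
    "medical_advice": "high",
    "loan_decision": "high",
    "benefit_dispute": "high",
}
ALWAYS_REVIEW = {"clinical_record_change", "high_value_transfer"}
TRIGGER_PHRASES = ("complaint", "lawyer", "emergency")


def route(intent: str, utterance: str, sentiment: float) -> Route:
    """Choose a handling path; unknown intents are never fully automated."""
    text = utterance.lower()
    # Dynamic escalation: strong negative sentiment or a trigger phrase.
    if sentiment <= -0.5 or any(phrase in text for phrase in TRIGGER_PHRASES):
        return Route.HUMAN_ESCALATION
    # Expert review queues: some actions are always reviewed.
    if intent in ALWAYS_REVIEW:
        return Route.REVIEW_QUEUE
    risk = INTENT_RISK.get(intent, "medium")
    if risk == "low":
        return Route.AUTOMATE
    if risk == "high":
        return Route.HUMAN_ESCALATION
    # Assisted decision making covers the middle layer.
    return Route.AGENT_ASSIST
```

Defaulting unclassified intents to `AGENT_ASSIST` rather than `AUTOMATE` is a deliberate choice in this sketch: new or ambiguous scenarios get a human approver until someone has assessed their risk.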

Human-in-the-loop design is also about redress. Customers need clear paths to challenge or appeal outcomes that involved AI. Agents in turn need tools that show how AI reached a suggestion and how to override it when local context or empathy matters more than statistical patterns.

Finally, technology choices must align with culture. Train frontline staff, supervisors, and compliance teams together so that everyone understands when to rely on AI, when to question it, and how to record decisions. When humans and machines each do what they are best at, AI Based Customer Support becomes a trusted extension of your service, not an opaque replacement.

Healthcare, financial services, and public sector organizations cannot afford to treat conversational AI as a side experiment on the edge of the contact center. The same systems that promise faster answers are now part of the regulated core of customer interaction, and they must stand up to scrutiny from security teams, regulators, and the public.

The path forward is not to wait on the sidelines until rules are perfectly clear. It is to build AI Based Customer Support that makes compliance visible and testable: journeys mapped to rule sets, consent embedded in conversations, data minimized and protected at every hop, audit trails that show how decisions were made, and humans ready to step in where risk is highest.

For CX and digital transformation leaders, that approach turns AI from a source of anxiety into a strategic advantage. When customers and regulators see that your organization can innovate without compromising privacy or fairness, they gain something scarce in a noisy market: confidence that automation is working for them, not on them.